I’ve been using an AI tool called Walter to write product and service reviews, but I’m not sure if what it generates really sounds human or still comes off as obviously AI-written. I’d like feedback from people who know about AI content or write reviews themselves: what should I change in my prompts or editing process so these Walter AI reviews read as natural, trustworthy, and human to both readers and search engines?

Walter Writes AI Review

I spent an afternoon messing around with Walter Writes AI and the results were kind of all over the place.

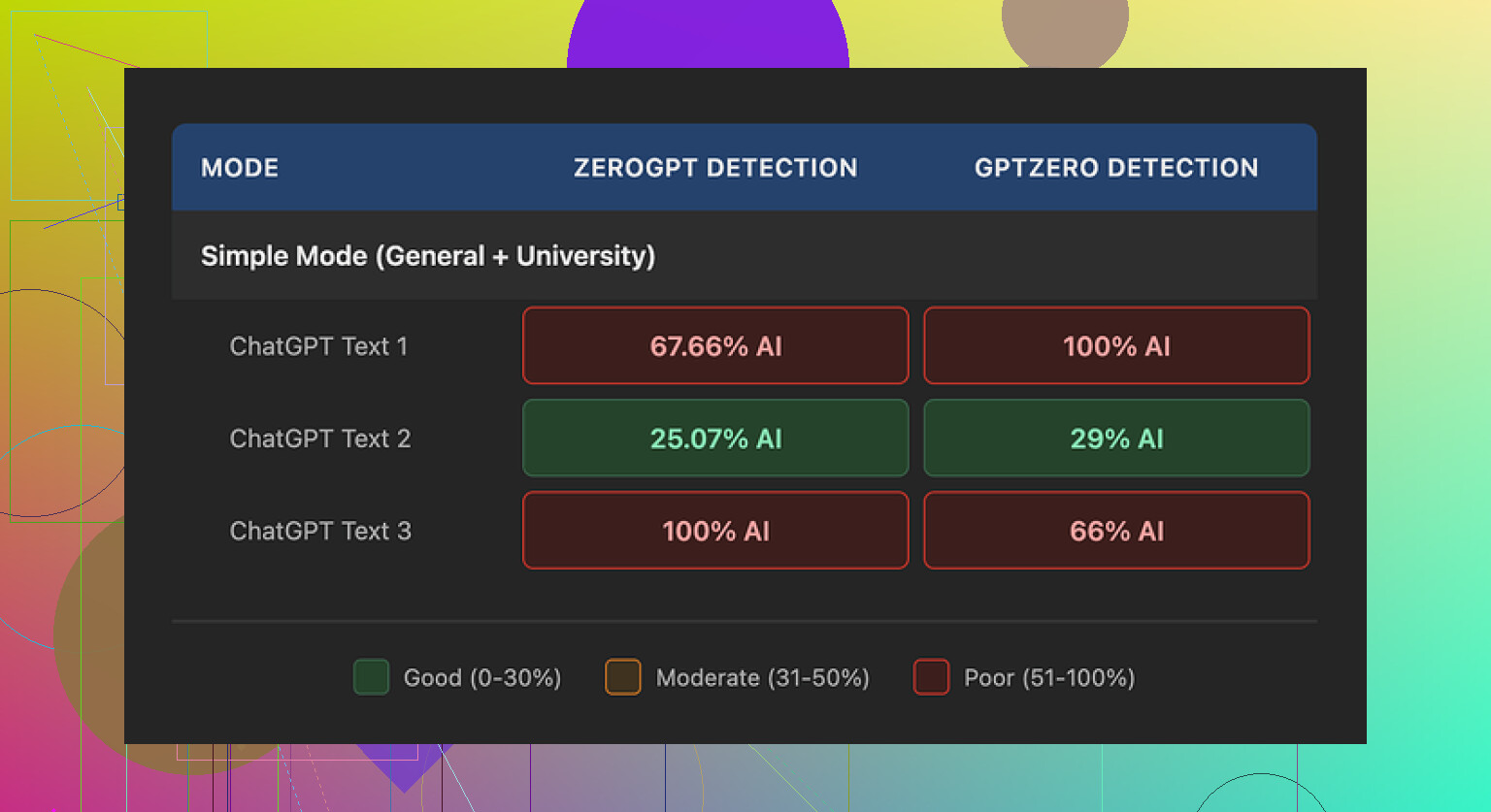

I ran three different samples through it, then checked each one on a couple of detectors:

- GPTZero

- ZeroGPT

One of the samples looked decent: GPTZero scored it 29% AI and ZeroGPT 25%. For a free-tier run, that is better than what most “AI humanizer” tools give you without a subscription.

The other two samples were a mess. Both of them hit 100% AI on at least one of the detectors. Same tool, same Simple mode, same session. Output quality hopped around a lot, which makes it hard to trust if you need consistent results.

To be fair, I only had access to the free Simple mode. The paid plans unlock “Standard” and “Enhanced” bypass options, so there is a chance those do better against detectors. I did not pay for those, so I cannot say how much of a jump you get.

Once I stopped staring at the scores and started reading the text as a human, a few patterns stood out.

- Weird semicolon addiction: it kept dropping semicolons where a normal writer would use a comma or split the sentence. It felt like someone trying to sound formal after skimming a grammar blog.

- Repeated words: in one sample, the word “today” showed up four times in three sentences. Same position, same tone. It started reading like a template.

- Copy-paste style parentheticals: it loved parentheses with examples, like “(e.g., storms, droughts)”. That exact pattern repeated across the piece. It reads like a textbook, not like a person talking.

If you are trying to avoid AI detectors, those sorts of patterns do not help, even if the scores look fine on one specific test.
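To catch these three habits before posting, a short script can count them. This is a minimal Python sketch, assuming plain-text input. `pattern_report`, the four-letter cutoff for “repeated words,” and the e.g./i.e. regex are illustrative choices, not anything Walter, GPTZero, or ZeroGPT expose. It is a self-editing aid, not a detector:

```python
import re
from collections import Counter


def pattern_report(text: str, top_n: int = 3) -> dict:
    """Count the surface habits described above (hypothetical helper).

    - semicolons
    - parentheticals that start with "e.g." or "i.e.", like "(e.g., storms, droughts)"
    - the most frequent words of 4+ letters that appear more than once
    """
    semicolons = text.count(";")
    eg_parens = len(re.findall(r"\((?:e\.g\.|i\.e\.)[^)]*\)", text, flags=re.IGNORECASE))
    # Only words of 4+ letters, so "the", "and", "was" don't dominate the counts.
    words = re.findall(r"[a-z']{4,}", text.lower())
    repeated = [(w, c) for w, c in Counter(words).most_common(top_n) if c > 1]
    return {
        "semicolons": semicolons,
        "eg_parentheticals": eg_parens,
        "repeated_words": repeated,
    }
```

On a sample like “Today we tested it; today it worked (e.g., storms, droughts). Today was fine; really.” it reports two semicolons, one e.g. parenthetical, and “today” three times.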

Pricing and limits

Here is what I noted from their pricing page:

- Starter plan
  - From $8 per month if you pay yearly
  - 30,000 words per month

- Unlimited plan
  - From $26 per month
  - Still caps each submission at 2,000 words per run

So “Unlimited” does not mean you can process unlimited text in one go. You need to chunk bigger texts into smaller parts, which slows everything down and introduces style shifts between chunks.
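Chunking by hand gets tedious. This is a minimal Python sketch, assuming the 2,000-word cap is counted as whitespace-separated words. Walter’s own counter may differ, so a lower `max_words` leaves headroom. `chunk_by_words` is a hypothetical helper that breaks between paragraphs where it can:

```python
def chunk_by_words(text: str, max_words: int = 2000) -> list[str]:
    """Split text into chunks of at most max_words words (hypothetical helper).

    Breaks only between paragraphs (blank-line separated) unless a single
    paragraph is longer than the cap, in which case that paragraph is cut.
    Note: each paragraph's words are re-joined with single spaces.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in paragraphs:
        words = para.split()
        # An oversized paragraph has to be split mid-paragraph.
        while len(words) > max_words:
            if current:
                chunks.append("\n\n".join(current))
                current, count = [], 0
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new chunk if this paragraph would push us over the cap.
        if current and count + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(" ".join(words))
        count += len(words)
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting at paragraph boundaries means each submission starts and ends on a complete thought. It does not remove the style shifts between chunks, so the joins are still worth reading.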

Free tier

- Total allowance of 300 words

- Enough for quick tests

- Not enough if you want to process longer articles, essays, or reports

Policy stuff that bothered me

Two things made me step back before adding a card:

- Refund language: the refund section had aggressive chargeback wording, including threats of legal action around disputes. That might never be enforced, but the tone alone made me uncomfortable.

- Data retention: I could not find a clear, detailed statement on how long they keep your text, where it is stored, or how it is used for training. For anything sensitive, that is a dealbreaker for me.

What I ended up using instead

After bouncing around different tools for a while, I landed on Clever AI Humanizer. In my tests, its output read more like something a normal person would write, and I did not have to pay or create an account.

You can try it here:

For more context and walk-throughs, these helped:

- Humanize AI (Reddit tutorial): https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

- Clever AI Humanizer review on Reddit: https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

- YouTube video review: https://www.youtube.com/watch?v=G0ivTfXt_-Y

If you are deciding between these tools, I would start with Clever on a longer piece of your own writing, then run the output from both tools through GPTZero and ZeroGPT. Look at the text, not only the scores. The patterns in phrasing tell you more than any single percentage.

Short answer: no. Walter’s output usually does not pass as human if someone knows what to look for, even if detectors sometimes give you a “low AI” score.

I agree with a lot of what @mikeappsreviewer saw, but I would focus less on detectors and more on how it reads to a picky human.

What I see most often in Walter style text:

- Rhythm and sentence flow: reviews from Walter tend to have a flat rhythm. You get medium-length sentences in a row, same tone, same “review voice.” Human reviewers mix things more. Short punchy line. Then a longer thought. Then a side comment. AI stuff keeps one tempo.

- Overexplaining simple stuff: for product or service reviews, Walter likes to overdefine obvious things. For example, instead of “Shipping was slow,” you get “The shipping experience was somewhat slower than expected, which affected my perception of the overall service.” Real users do not talk like that on Amazon or Yelp.

- “Balanced” tone even when negative: people who are annoyed do not write balanced corporate reviews. They repeat themselves, they rant a bit, they go off-topic. Walter keeps trying to sound fair and calm. That screams AI to anyone who reads a lot of user reviews.

- Lack of “stakes” or specifics: a human review often has something at stake. “I needed this for my job,” “Gift for my mom,” “I use this every day for school.” Walter tends to stay generic: “This product is helpful for many users” instead of “I use this on my night shift.”

- Word choice “tells”: you might not hit the exact semicolon habit @mikeappsreviewer saw every time, but similar quirks show up. Unusual formal words dropped into casual text. Reused phrases like “overall experience,” “from my perspective,” “on the other hand.” Humans repeat themselves too, but not in that fixed pattern.
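A rough way to check the flat-rhythm point is to measure how much sentence length varies. This is a minimal Python sketch. `sentence_rhythm` is a hypothetical helper, and its splitter is naive: it treats every `.`, `!`, or `?` followed by whitespace as a sentence end, so “e.g.” will confuse it. The score is a proxy for “one tempo,” not how any particular detector works:

```python
import re
from statistics import mean, pstdev


def sentence_rhythm(text: str) -> dict:
    """Report sentence count, mean length in words, and variation (hypothetical helper).

    variation = population std dev of sentence lengths / mean length.
    Values near 0 mean every sentence is roughly the same length.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if not lengths:
        return {"sentences": 0, "mean_words": 0.0, "variation": 0.0}
    avg = mean(lengths)
    return {
        "sentences": len(lengths),
        "mean_words": avg,
        "variation": pstdev(lengths) / avg,
    }
```

A draft where every sentence runs 7 or 8 words scores close to 0. Mixing a one-word line, a long thought, and a short aside pushes the number up.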

What I would do if you want your Walter reviews to sound more human:

- Add your own “anchor details”: after Walter writes the review, add 1 or 2 specific details from your real-life use. Examples:
  - Mention when and where you used it.
  - Mention a small annoyance that is too specific for AI to guess, like “the lid catches on my backpack zipper.”
  - Mention how you found the product: “Saw it on a TikTok ad” or “Coworker recommended it.”

- Break the structure on purpose: Walter likes a clean intro, body, and conclusion. Mess that up a bit.
  - Move a complaint to the top.
  - Add a one-word sentence like “Annoying.” or “Solid.”
  - Drop one short offhand comment in parentheses that sounds like you, not like a help article.

- Shorten and “deformalize”: go through and kill weak filler like “overall,” “in terms of,” “when it comes to.” Swap phrases:
  - “The overall experience was positive” → “I liked it.”
  - “I would recommend this product to others” → “I would buy it again.”

- Inject conflict or emotion: even in positive reviews, humans mix pros and cons. Example, from AI style to human style:
  - AI: “The customer service team was helpful and responsive.”
  - More human: “Support answered my email in a few hours, though the first reply felt copy-pasted.”

- Do not trust one detector: detectors disagree a lot. Use them as a rough signal, not as a gate.
  - If text is marked high AI but you like how it reads, keep it and humanize a few lines.
  - If text is marked low AI but reads stiff, fix the stiffness first.
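The filler-and-swap step is easy to semi-automate as a find list. This is a minimal Python sketch. `FILLER_SWAPS` is a hypothetical starter list built only from the phrases and swaps quoted above, and `find_filler` flags rather than rewrites, so the final wording stays yours:

```python
import re
from typing import Optional

# Hypothetical starter list taken from the phrases above; extend with your own.
# None means "no suggested swap, just flag it".
FILLER_SWAPS: dict[str, Optional[str]] = {
    "the overall experience was positive": "I liked it",
    "i would recommend this product to others": "I would buy it again",
    "in terms of": None,
    "when it comes to": None,
    "overall": None,  # also matches inside the longer phrase above, so counts overlap
}


def find_filler(text: str) -> list[tuple[str, int, Optional[str]]]:
    """Return (phrase, count, suggested swap) for each filler phrase present."""
    lowered = text.lower()
    hits = []
    for phrase, swap in FILLER_SWAPS.items():
        # Whole-word/phrase matches only, case-insensitive via lowercasing.
        count = len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        if count:
            hits.append((phrase, count, swap))
    return hits
```

For “Overall, the overall experience was positive. In terms of price, fine.” it flags the long phrase once (suggesting “I liked it”), “in terms of” once, and “overall” twice.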

If your goal is to reduce the AI “vibe,” Clever AI Humanizer is worth trying, especially if you run your own draft through it. I would not rely only on it or on Walter, though. Best combo in my experience:

1. Walter to draft
2. Clever AI Humanizer to rough-humanize
3. You edit the final version for details, emotion, and small imperfections

Also, leave a few harmless typos or slightly weird commas in, like humans do. Not every sentence has to be perfect.

Short version: no, raw Walter stuff doesn’t really pass as human to anyone who reads a lot of real reviews, even if you occasionally “beat” a detector.

I’m with @mikeappsreviewer and @voyageurdubois on most of their points, but I think people are over-fixating on semicolons and sentence length. Those are symptoms, not the core issue.

The big tells I see with Walter:

- It doesn’t sound like a person with a reason to write. Real reviews usually come from:
  - Someone excited they found a great thing
  - Someone annoyed because something broke or was late
  - Someone trying to warn or hype other buyers

  Walter reviews often sound like a school assignment. They describe, they “evaluate,” but they rarely feel like “I’m writing this because I care about X.”

- Time and money basically don’t exist. Humans talk about time and money constantly in reviews:
  - “Took 6 days instead of 2”
  - “For 40 bucks I expected better”
  - “I’ve been using it for 3 months”

  Walter tends to gloss over that or make it generic. Add those yourself if you keep using it: the exact price you paid, when you bought it, how often you use it.

- No real friction with the brand. Real people mention tiny frictions:
  - “Had to reset it twice”
  - “Instructions sucked, watched a YouTube video instead”
  - “Their chat bot ignored my question”

  Walter’s “cons” are usually very balanced and abstract. That’s the part that screams AI more than any weird punctuation.

Where I slightly disagree with the others: you do not need to run every single thing through multiple detectors. Detectors are flaky and give false alarms both ways, flagging human text as AI and passing AI text as human. Use them occasionally as a sanity check, not as the main goal. If it reads like something a bored college student or a ranty Amazon buyer would actually post, you’re 80% there, regardless of a percentage score.

Stuff you can do that wasn’t really covered:

- Start from your own messy bullet list. Instead of giving Walter the full prompt “Write a detailed review of X,” do this:
  - Write 5 to 10 messy bullets in your own voice:
    - “bought this because my old one died”
    - “arrived 3 days late, kinda pissed me off”
    - “love the battery, used all weekend”
    - “buttons feel cheap”
  - Then tell Walter: “Turn these bullets into a short review but keep the casual tone.”

  Your own raw details force it into more human territory and reduce that generic “overall experience was positive” stuff.


- Give it an attitude profile. Most Walter reviews sit in polite-middle mode. Try prompts like:
  - “Write this like I’m mildly annoyed and a bit sarcastic”
  - “Write this like I’m trying to convince my friend to buy it”
  - “Write this like I’m venting after a long day”

  Then you skim and dial it back yourself. That emotional stance matters more than word choice.

- Intentionally break logic a tiny bit. Humans backtrack and contradict themselves in small ways:
  - “At first I hated the interface, but now I’m kinda used to it.”
  - “I said I wouldn’t buy again, but honestly if it’s on sale I probably would.”

  Walter tries too hard to be logically clean. Toss in one or two believable mini-contradictions when you edit.

- Embed small “offline” references. AI tools often avoid stuff like:
  - “My neighbor recommended this after his broke”
  - “Saw this at Target first, then grabbed it cheaper online”
  - “Using this on my night shift in a hospital”

  Those kinds of details ground the review in a real-life context that detectors can’t easily guess from the product listing.
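The messy-bullet and attitude-profile ideas combine naturally into one prompt. This is a minimal Python sketch that only assembles the text you would paste into Walter. It does not call any Walter API, and none is assumed. `build_review_prompt` and its exact wording are hypothetical, adapted from the prompts quoted above:

```python
def build_review_prompt(bullets: list[str], attitude: str, max_words: int = 150) -> str:
    """Assemble a drafting prompt from your own notes (hypothetical helper).

    bullets:   your raw notes, in your own voice
    attitude:  an attitude line like "Write this like I'm venting after a long day"
    max_words: a soft length target written into the prompt text
    """
    # Skip empty bullets and format the rest as a dash list.
    notes = "\n".join(f"- {b.strip()}" for b in bullets if b.strip())
    return (
        f"Turn these bullets into a short review (under {max_words} words) "
        f"but keep the casual tone. {attitude}. "
        "Do not add details that are not in the bullets.\n\n"
        f"{notes}"
    )


if __name__ == "__main__":
    print(build_review_prompt(
        ["bought this because my old one died",
         "arrived 3 days late, kinda pissed me off",
         "love the battery, used all weekend",
         "buttons feel cheap"],
        "Write this like I'm mildly annoyed and a bit sarcastic",
    ))
```

Keeping the notes verbatim at the end of the prompt makes it easy to check afterward that every detail in the draft came from you.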

On tools: if you want something to help “de-robot” the text, Clever AI Humanizer is worth a try. Not as a magic “make it human” button, but as one step in a pipeline:

- Walter for the structured draft

- Clever AI Humanizer to knock off the stiff edges

- You for the real-life bits, small frustrations, money/time details, and imperfect phrasing

If you do that, you’ll get reviews that are way closer to normal user content than what Walter gives by default. And honestly, even one or two rough edges and oddly placed commas from your own typing help more than any “bypass AI detector” marketing claim.

So, if your question is “Does Walter sound human out of the box?” I’d say: not really.

“Can you make it pass as human with some hands-on editing and maybe Clever AI Humanizer in the mix?” Yeah, that’s actually realistic.

Short version: Walter can be useful as a drafting tool, but on its own it rarely feels like a real person who just bought a thing and has feelings about it. I’m mostly with @voyageurdubois, @viajeroceleste and @mikeappsreviewer, with a few tweaks.

Where I slightly disagree: I think people are over-correcting for the “AI vibe.” If you scrub too hard and over-engineer your prompts, you end up with a different kind of fake, like a human trying to impersonate an AI trying to impersonate a human. Better to lean into your own voice and use Walter as scaffolding, not the finished wall.

A few angles that haven’t really been covered:

1. Device & context fingerprints

Real reviews leak context without trying:

- “Typed this on my phone on the bus so sorry for typos”

- “Writing this after a 12‑hour shift”

- “Using this on a Windows laptop with two monitors”

Walter reviews usually float in a vacuum. Add one or two casual context clues and you instantly break that “generic review blog” vibe. This matters more than whether a detector says 23% or 72% AI.

2. Opinion density vs explanation density

Walter often has a low ratio of opinions to explanations. Lots of “this feature allows you to…” and not enough “this is actually annoying / great in practice.” Humans invert that: quick description, strong judgment, maybe a story.

When you edit, try to:

- Cut one explanatory sentence for every extra line of opinion you add.

- Add 1 or 2 “hard” judgments: “Hated this part”, “Surprisingly good”, “Not worth full price.”

That alone moves it closer to human than another round of rephrasing.
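The opinion-to-explanation ratio can be eyeballed with a keyword count. This is a minimal Python sketch. The marker lists are hypothetical and deliberately short, and matching is plain substring search (so “love” also hits “glove”). The result is a crude heuristic for the idea above, not a classifier:

```python
import re

# Hypothetical marker lists; tune them to your own writing and product category.
EXPLANATION_MARKERS = ("allows you to", "lets you", "is designed to", "this feature", "features a")
OPINION_MARKERS = ("hated", "love", "annoying", "surprisingly", "worth", "great", "cheap", "disappointed")


def opinion_ratio(text: str) -> float:
    """Opinion sentences divided by explanation sentences (hypothetical helper).

    A sentence counts as opinion or explanation if it contains any marker from
    the matching list; one sentence can count as both.
    """
    sentences = [s.lower() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    opinions = sum(any(m in s for m in OPINION_MARKERS) for s in sentences)
    explanations = sum(any(m in s for m in EXPLANATION_MARKERS) for s in sentences)
    # Avoid dividing by zero when nothing reads as explanation.
    return opinions / max(explanations, 1)
```

Three “this feature allows you to…” style sentences score 0.0. A draft with four judgments and one explanation scores 4.0. Higher means more opinion per explanation.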

3. Consistency with your other reviews

Detectors do not know your history, but sites sometimes do. If all your past organic reviews are short, messy, and a bit emotional and you suddenly drop a polished 600-word “overall experience” essay, it stands out.

Strategy that works better than obsessing over Walter’s default output:

- Decide your “persona” once: short & blunt, or medium length & chatty.

- Force Walter to stick to that by literally pasting one of your old real reviews and saying “Match this tone and length.”

That trims a lot of Walter’s stiffness before you even touch the text.

4. Use humanizers sparingly, not as a crutch

Clever AI Humanizer is handy, but not a magic disappear‑the‑AI button. Think of it like a style filter in the middle of your workflow, not the endpoint.

Clever AI Humanizer: quick pros & cons

Pros:

- Often breaks the “corporate review” cadence and adds more casual phrasing.

- Can smooth out obvious Walter-isms like “overall experience” spam without you rewriting from scratch.

- No account requirement is nice if you are just experimenting.

Cons:

- It can over-casualize and sometimes flatten whatever personal tone you already had.

- Still predictable if you rely on it alone; you get a “Clever flavor” instead of a “Walter flavor.”

- You still need to layer in real details (time, price, context) or it remains generic, just in different words.

Used like this, it works well:

Walter draft

→ Clever AI Humanizer for a first de-robot pass

→ Your 3–5 minute edit for: money/time mentions, small frustrations, context leaks, and one or two “imperfect” sentences

Compared to what @voyageurdubois and @viajeroceleste said, I’d worry less about gaming multiple detectors and more about building a consistent, slightly flawed style that you can reproduce across reviews. Walter and tools like Clever AI Humanizer are fine helpers, but the parts that actually convince picky humans are almost always the tiny, boring specifics that only you can add.