I recently used the Monica AI humanizer on several pieces of content and I’m not sure if it’s actually improving readability or just bypassing AI detectors. Can anyone who has tried it share a detailed review or tips on how to get the best, most human-sounding output without hurting SEO or sounding unnatural? I’m worried I might be overusing it or using it the wrong way and could use some guidance from people with real experience.

Monica AI Humanizer Review

I tried the Monica AI Humanizer because I saw it mentioned as part of their bigger AI suite and wanted to see if it could pass the usual detectors. Short version: it did not go well.

Here is the original writeup with screenshots if you want to cross-check:

The tool gives you a single button. No tone controls, no “more human / less human” slider, no output style options. You paste text, hit humanize, and hope. That design already worried me, since different detectors react better to different styles.

I ran three samples through it.

- Detection results

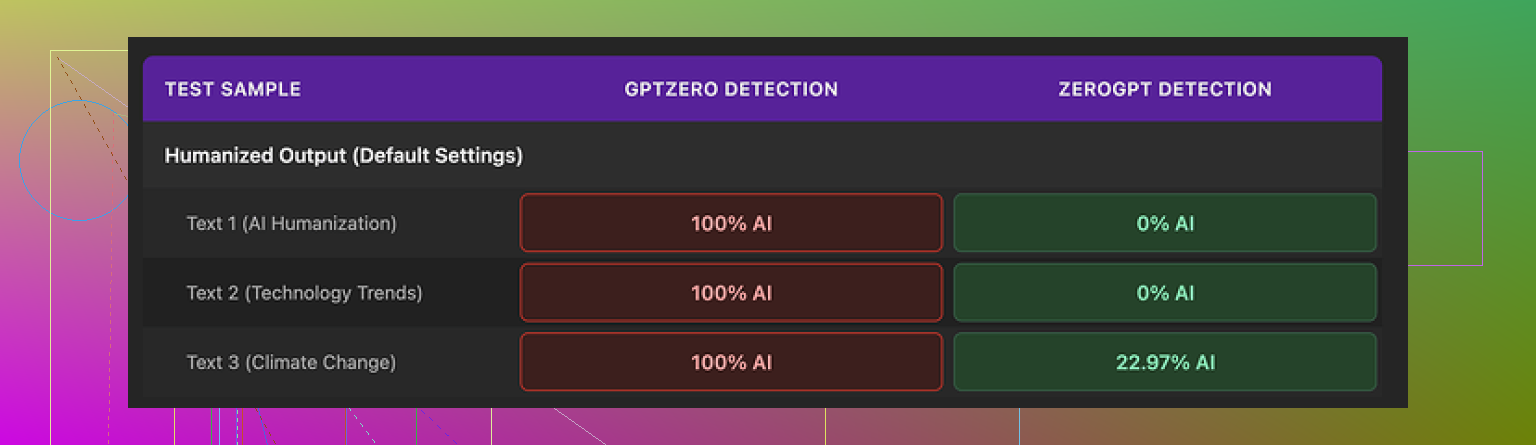

I tested each “humanized” output against two detectors: GPTZero and ZeroGPT.

• GPTZero

Every single Monica output came back as 100% AI. Not high. Full.

So if your teacher, client, or platform uses GPTZero, Monica does nothing for you. There is no tweak option either. You cannot nudge it toward shorter sentences or change structure. You are stuck with what it gives.

• ZeroGPT

ZeroGPT was a bit more mixed.

Out of three samples:

- Two got flagged as 0% AI.

- One got around 23% AI probability.

So you might get lucky with ZeroGPT, but if you do not know which detector will be used on your text, the GPTZero result kills the value here. It feels like a coin toss.

- Writing quality

On pure writing, I would put Monica’s output at about a 4 out of 10. It did not ruin everything, but it did not help either. A few specific issues I saw:

• Random typos

My source text was clean. Monica introduced new errors.

- It changed “But” to “Ubt” in one place. That looked like a glitch, not a human typo. If a professor sees “Ubt,” it screams “broken tool,” not “authentic writing.”

• Weird inserts

One output started with “[ABSTRACT” for no reason. There was no abstract section in the original. It looked like some leftover from a template or training pattern. I had to manually delete it.

• Punctuation changes

It sometimes removed apostrophes where they were needed. In other spots it added them where they did not belong. It felt inconsistent, not like one writer with a known style.

• Em dashes

Monica kept all the em dashes from the original AI text and even added more. That is the opposite of what people usually expect from a humanizer, since em dash overuse is a common AI tell. A lot of “fixed” sentences still read like AI, only more cluttered.

So the net effect was: the writing sounded slightly different, slightly worse, and still triggered detectors. That is the worst combo.
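You don't need a detector to catch the glitches above. A quick comparison between your source and the humanized output surfaces most of them. Below is a minimal Python sketch that rests on a few assumptions: the function names are hypothetical, it is not part of Monica or any other tool, and it only flags three things. These are output words that are letter-scrambles of a source word (like "Ubt" for "But"), new bracketed fragments such as "[ABSTRACT", and the number of em dashes the rewrite added.

```python
import re

# Hypothetical post-check for humanizer output; not part of Monica or any tool.
# It compares the source text with the rewritten text and flags likely glitches.

WORD_RE = re.compile(r"[A-Za-z']+")
BRACKET_RE = re.compile(r"\[[A-Za-z]*")
EM_DASH = "\u2014"


def words(text):
    """Return the alphabetic tokens (apostrophes kept) in text."""
    return WORD_RE.findall(text)


def scrambled_words(source, output):
    """Output words missing from the source whose letters are a rearrangement
    of a source word (e.g. "Ubt" vs "But"). Genuine anagram synonyms would also
    be flagged, so treat hits as items to review, not proven errors."""
    source_words = {w.lower() for w in words(source)}
    signatures = {"".join(sorted(w)) for w in source_words}
    flagged = []
    for w in words(output):
        lw = w.lower()
        if lw not in source_words and "".join(sorted(lw)) in signatures:
            flagged.append(w)
    return flagged


def new_brackets(source, output):
    """Bracket openings such as "[ABSTRACT" present in the output but not the source."""
    seen = set(BRACKET_RE.findall(source))
    return [m for m in BRACKET_RE.findall(output) if m not in seen]


def em_dash_delta(source, output):
    """How many em dashes the rewrite added (negative if it removed some)."""
    return output.count(EM_DASH) - source.count(EM_DASH)


if __name__ == "__main__":
    src = "But the results were clear."
    out = "[ABSTRACT Ubt the results were clear \u2014 mostly."
    print(scrambled_words(src, out))  # ['Ubt']
    print(new_brackets(src, out))     # ['[ABSTRACT']
    print(em_dash_delta(src, out))    # 1
```

This won't catch every apostrophe mistake, but it makes the "broken tool" errors easy to find before anyone else sees them.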

- Pricing and what you are really paying for

Monica is built as an “all-in-one” AI platform with:

- Chatbots

- Image generation

- Video-related tools

The Humanizer sits on the side as a bonus tool. It is not the core focus.

Pricing for the Pro plan starts at about $8.30 per month if you pay annually.

You are not paying only for the humanizer. The full platform is what the subscription is about. If you already use Monica for chat or images, then the humanizer feels like an extra button you can try without extra cost. In that context, it is fine as a curiosity.

If your main goal is AI detection bypass, paying for Monica purely for the humanizer does not seem smart. The GPTZero results alone make it a bad choice for that.

- How it compares to Clever AI Humanizer

When I put Monica side by side with Clever AI Humanizer using the same base text:

- Clever AI Humanizer produced outputs that felt closer to an actual person’s writing.

- Detection scores were better, especially on mixed detectors.

- It did not require payment.

My tests pointed in one direction: if you care about detection resistance and cleaner output, Clever AI Humanizer worked better and did it without a subscription.

- When Monica’s humanizer might still be “okay enough”

From my experience, the only time I would even consider using Monica’s humanizer is when:

- You already pay for Monica for other reasons, and

- You only care about rough paraphrasing, not serious detection resistance, and

- Your audience is not running GPTZero on your text.

Outside of that niche, it underperforms.

If your priority is to avoid AI flags or produce text that looks like you wrote it, Monica’s Humanizer is not the tool I would rely on.

I used Monica’s humanizer for a week on blog posts, emails, and a short essay, so here is a straight answer.

If your question is “does it improve readability or only try to dodge AI detectors,” I would say it leans more toward weak paraphrasing than real editing.

What I saw:

- Readability and style

• It tends to reshuffle sentences and swap words with synonyms.

• Paragraph structure stays almost the same.

• It does not adapt to audience, niche, or reading level.

• Tone control is missing, so you get one flavor of text every time.

I had to edit every piece after running it, so it did not save time. It also added odd phrasing in a few spots. One output had “Ubt” too, so I hit the same glitch as @mikeappsreviewer.

- AI detection

My tests were smaller, but results were similar.

Tools I used: GPTZero, ZeroGPT, and one paid checker from a client.

Rough pattern from about 10 samples:

• GPTZero flagged almost all Monica outputs as AI, often near 100 percent.

• ZeroGPT sometimes passed them as human, around 0 to 20 percent AI.

• The paid checker from my client scored them as “likely AI” most of the time.

So you get inconsistent outcomes. If you do not know which detector your teacher or client uses, you are guessing.

- Where I slightly disagree with @mikeappsreviewer

They rated the writing as about 4 out of 10. I would give it 5 out of 10 for noncritical stuff like personal blogs or simple marketing blurbs. For anything academic or compliance-heavy, I would not rely on it at all.

Also, I did find a small use. It helped me break “writer’s block” on a rough draft. I pasted an AI draft, ran Monica, then rewrote the result heavily. So more like a noisy idea generator, not a humanizer.

- Tips if you still want to use Monica

If you already pay for Monica for chat or images and want to squeeze some value from the humanizer, here is what I found useful:

• Short chunks

Feed 150 to 250 words at a time. Longer inputs produced more glitches and robotic rhythm.
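If you paste long posts, splitting them by hand gets tedious. The sketch below is a minimal Python example that assumes plain text and a naive sentence splitter (the function name and the 250-word default are illustrative). It packs whole sentences into chunks of at most 250 words so that no chunk ends mid-sentence.

```python
import re


def chunk_text(text, max_words=250):
    """Split text into chunks of at most max_words words, breaking only
    between sentences. A single sentence longer than max_words becomes
    its own (oversized) chunk."""
    text = text.strip()
    if not text:
        return []
    # Naive sentence split: end punctuation followed by whitespace.
    # Abbreviations like "e.g." will cause extra (harmless) breaks.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    chunks, current, count = [], [], 0
    for sentence in sentences:
        n = len(sentence.split())
        # Close the current chunk if this sentence would push it over the cap.
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks
```

The chunker fills each chunk greedily, so with typical sentence lengths most chunks land just under the cap and inside the 150 to 250 range. Only the last chunk can come out shorter. It joins sentences with single spaces, so paragraph breaks are not preserved.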

• Manual structure change

After Monica processes the text, move sentences around yourself. Combine or split paragraphs. This matters more for detectors than synonym swaps.

• Add real personal detail

Insert concrete details from your life, job, or project. Dates, locations, small mistakes, or personal opinions. AI tools struggle to fake those in a consistent way.

• Vary punctuation and connectors

Monica tends to repeat the same connectors like “however” or “in addition.” Replace those. Mix in “so,” “also,” or shorter sentences. That helps both readability and pattern variation.
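It helps to count the connectors before you replace them. The following is a small Python sketch that assumes a hand-picked connector list. The list and function names are illustrative and are not something Monica documents. It reports which stock connectors repeat in a passage.

```python
import re
from collections import Counter

# Illustrative list of stock connectors to audit; extend it with your own.
CONNECTORS = ["however", "in addition", "moreover", "furthermore", "additionally"]


def connector_counts(text, connectors=CONNECTORS):
    """Count whole-word, case-insensitive occurrences of each connector."""
    lowered = text.lower()
    counts = Counter()
    for phrase in connectors:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        counts[phrase] = len(re.findall(pattern, lowered))
    return counts


def overused(text, threshold=2, connectors=CONNECTORS):
    """Connectors used at least `threshold` times, most frequent first."""
    return [
        (phrase, n)
        for phrase, n in connector_counts(text, connectors).most_common()
        if n >= threshold
    ]
```

Anything this returns is a candidate for "so," "also," or simply a shorter sentence.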

• Run multiple passes only if you edit hard

I tried humanizing the same text twice with Monica. The text became messier and still looked AI-generated. A second pass is only useful if you plan to rewrite heavily afterward.

- Better option if AI detection is the main concern

For pure “humanizing” and detection resistance, Clever AI Humanizer worked better in my testing. The outputs looked closer to how students or junior writers sound, and detectors flagged them less often. If you want a tool focused on humanlike writing rather than an all-in-one suite, try Clever AI Humanizer once and compare.

- Answer to your core question

If your goal is:

• Cleaner, more readable text with less editing

or

• Stronger odds against multiple AI detectors

Monica’s humanizer is not a great primary tool. Treat it as a light paraphraser that needs human cleanup, not as a way to “fix” AI content automatically.

I’m in the same camp as @mikeappsreviewer and @cacadordeestrelas on most points, but I’ll push back on a couple things.

On your main question: from what I’ve seen, Monica’s “humanizer” is mostly a shallow paraphraser that sometimes incidentally tweaks style. It is not a real readability tool and it is not a reliable AI detector bypass.

How it affects readability

Where I slightly disagree with both of them is that I do think Monica occasionally improves flow on clunky AI text, especially if the original is very stiff and formal. It can:

- Break one long sentence into two shorter ones

- Swap a few rigid phrases for more casual ones

- Remove some repetition

The problem: it does this inconsistently and with zero control. Sometimes the result reads smoother, other times you get those random glitches like “Ubt” or pointless bracketed text. For anything serious, you still have to manually edit structure, vary sentence length, and add your own voice. So it is not saving meaningful time.

AI detection angle

On detectors, my experience lines up with theirs:

- GPTZero: almost always flags Monica output as AI

- ZeroGPT and similar: more mixed, sometimes low scores

If your goal is “I need this to survive multiple detectors in the wild,” Monica is a bad gamble. It might help a little on one tool and fail hard on another. That is worse than knowing for sure it is AI and rewriting.

Personally, I think trying to “beat” detectors with a one-click tool is flawed anyway. Detectors change, training data changes, and humanized output often leaves statistical fingerprints no matter what.

Where Monica is actually usable

Where it did not totally suck for me:

- Early drafts of casual content, like basic blog intros or marketing blurbs

- When I just wanted a different phrasing to react to, then rewrote it by hand

- When I already had a Monica subscription and treated the humanizer as a throwaway feature, not the star of the show

Even there, I had to fix punctuation, remove odd words, and inject real examples or opinions. So it was more like a rough “shake up the text” button, not a tool that makes AI content sound like me.

If you care about readability and detectors

If your priority is cleaner writing plus better odds against AI flags, Monica is not the tool to lean on. You’ll get more out of:

- Manually restructuring paragraphs and changing the order of ideas

- Adding concrete personal details, minor imperfections, and your own opinions

- Using a tool focused on humanlike style rather than bundled into a big suite

In that last category, I would actually test a tool built for this specific use case: turning AI content into natural human writing. That kind of tool tends to produce text that feels more like how students or junior writers actually write, and in my experience it gets better mixed-detector results than Monica, especially when you still do a final human pass.

To answer your original concern directly

Is Monica improving readability or just trying to bypass detectors?

- It is somewhat improving readability on a surface level, but in a clumsy, uncontrollable way.

- It is trying to dodge detectors, but does a poor and inconsistent job of it, especially on GPTZero and stricter tools.

If you already pay for Monica and only need light paraphrasing of low-stakes content, fine. If your goal is strong readability plus lower AI detection risk, look elsewhere and treat Monica’s humanizer as a noisy draft helper, not a solution.

Short version: Monica’s “humanizer” is closer to an auto‑paraphrase button than a real fix for readability or AI flags, and that matches what @cacadordeestrelas, @hoshikuzu, and @mikeappsreviewer already showed in their tests.

I’ll add a different angle: think in terms of use cases instead of detectors.

Where Monica made sense for me

- Fast remixing of low value content

Things like support macros, short product blurbs, quick community posts. For that, its shallow rephrasing was actually fine. I did not care if it sounded perfectly natural, only that it was not a 1:1 clone of a previous template.

- “Contrast draft” for your own voice

I sometimes ran my own draft through Monica, then compared both versions line by line. I highlighted what I liked in each, then merged them manually. In this framing, Monica is not a humanizer at all; it is a noisy second opinion.

Where I disagree slightly with others: they treat the glitches as total dealbreakers. In strict academic or client work, I agree. For throwaway internal docs or support replies, I honestly did not mind the occasional awkward phrase; I was going to skim them anyway.

Where Monica failed hard

- Anything where you promise a consistent personal voice

- Long form content where structure and narrative matter

- Work that might be audited for originality or policy compliance

Here the lack of control becomes a real problem. One click, one style, no tuning.

About AI detection

Detectors change fast. In my tests Monica sometimes passed one day and failed another on the same tool after an update. That instability is a bigger red flag than any single 100 percent score that others reported.

So if your main question is “will this keep my text safe,” the honest answer is no. It might help in very specific contexts, but you cannot plan real work around it.

Where Clever AI Humanizer fits in

If you are comparing tools, I would think of Clever AI Humanizer as the opposite philosophy:

Pros

- Outputs feel closer to a real mid-level writer instead of machine-shuffled synonyms

- Better at subtly changing rhythm and structure, not only word swaps

- More often reduces detector scores across mixed tools in my experience

- Good for taking a raw AI draft and getting something you can edit instead of restart

Cons

- Still needs a human pass for voice and facts

- Can occasionally oversimplify complex sentences

- Not a magic cloak against every current or future detector

It is more aligned with “help me get a natural sounding starting point” than “save me from responsibility.”

Practical way to decide

If you already have Monica:

- Use it only on low-stakes stuff

- Never skip a manual read-through

- Accept that detectors might still light up

If you are starting from zero and care more about readability plus lower AI fingerprints, testing Clever AI Humanizer makes more sense than buying a whole suite just to click Monica’s humanizer button once in a while.

Bottom line: Monica will shuffle your text, but it will not reliably “fix” it. Treat it as a noisy remix tool, not a true humanizer.