I’ve been using GPTHuman AI and some of the recent responses feel inconsistent or off compared to what I expected. I’m not sure if I’m prompting it wrong, misunderstanding its limits, or missing some settings. Can someone review how this AI is behaving for me, point out what might be going wrong, and suggest how to get more accurate and reliable answers?

GPTHuman AI Review, tested the hard way

GPTHuman site: GPTHuman AI Review with AI-Detection Proof

I tried GPTHuman because of that line on their page, “The only AI Humanizer that bypasses all premium AI detectors.” I wanted to see if it held up under normal tools people already use.

Here is what happened when I ran my usual tests.

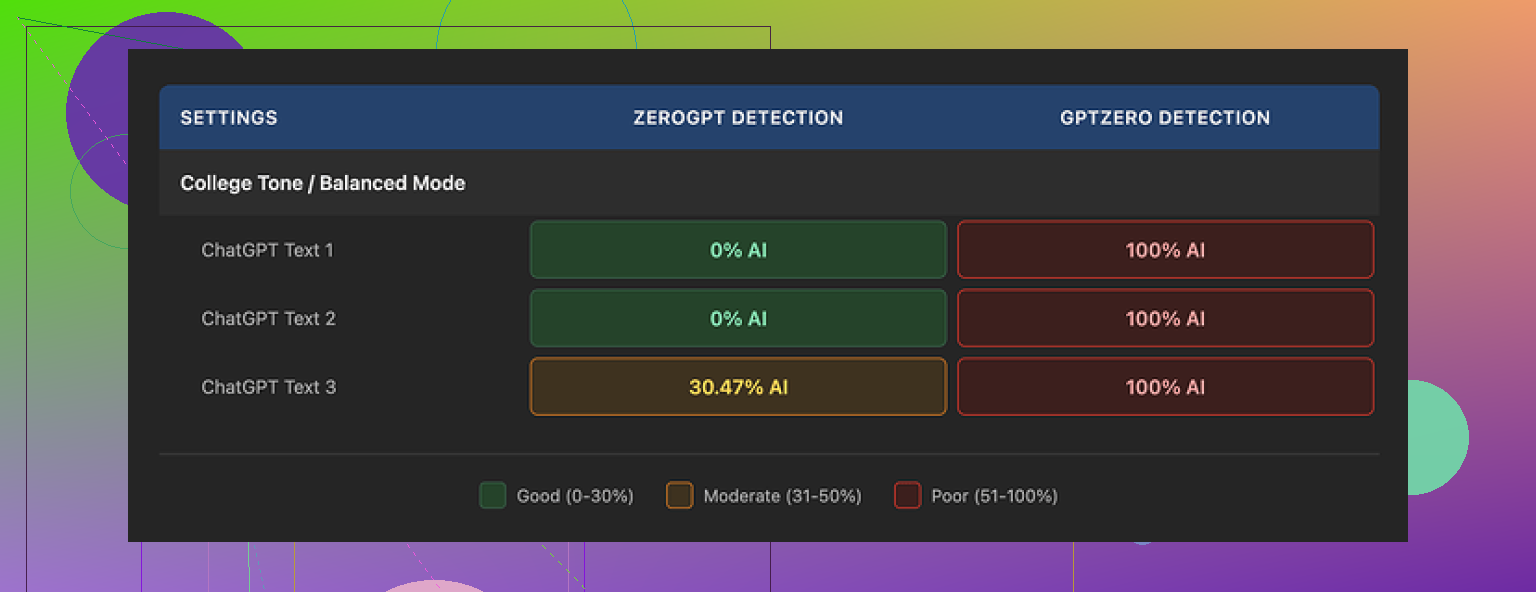

Detection results

I took three different AI-written samples, pushed each through GPTHuman, then ran the outputs through two detectors I use a lot:

• GPTZero

• ZeroGPT

Results:

• GPTZero flagged every single “humanized” output as 100% AI. All three. No partial scores.

• ZeroGPT passed two of the three outputs at 0% AI, but tagged the third one around 30% AI. So not perfect either.

The awkward part is the internal “human score” that GPTHuman shows. Those scores looked strong, like the text should pass detection. They did not match what GPTZero reported at all, which makes that internal meter feel more like a confidence sticker than a useful metric.

Text quality

The structure looked fine at first glance. Paragraphs were clean, output was formatted in a way that did not trigger any visual red flags. Then I read it slowly.

Stuff I kept running into:

• Subject and verb disagreeing in simple sentences.

• Sentences that dropped off or felt half written.

• Word swaps that broke the meaning, for example a wrong preposition or an odd synonym that did not fit the context.

• Endings that read like someone stopped mid-thought, almost nonsensical.

So if you feed it into a doc and skim it, you might think it is okay. If your boss, client, or teacher reads it carefully, it will look off.

Here is another screenshot from my tests:

Limits, pricing, and data use

The free tier felt tight.

• Free usage stopped at about 300 words in total. Not per piece, but across the whole account.

• I ended up signing up with three different Gmail accounts to finish the same test suite I run on all tools. That alone told me it was not aimed at anyone who needs to process longer drafts.

Paid plans when I checked:

• Starter plan: from $8.25 per month if you pay yearly.

• Unlimited plan: $26 per month, but “unlimited” did not mean unlimited per output. Single runs were capped at 2,000 words.

A few policy points that bothered me more than the price:

• Payments are non-refundable. If you do not like the results, you are stuck.

• Your text is used for AI training by default. There is an opt-out, but you have to look for it.

• They reserve the right to use your company name in their marketing unless you tell them not to. So if you are using this at work, you need to explicitly request removal.

If your use case involves sensitive docs or NDA work, this is the part you should read twice, not the slogan.

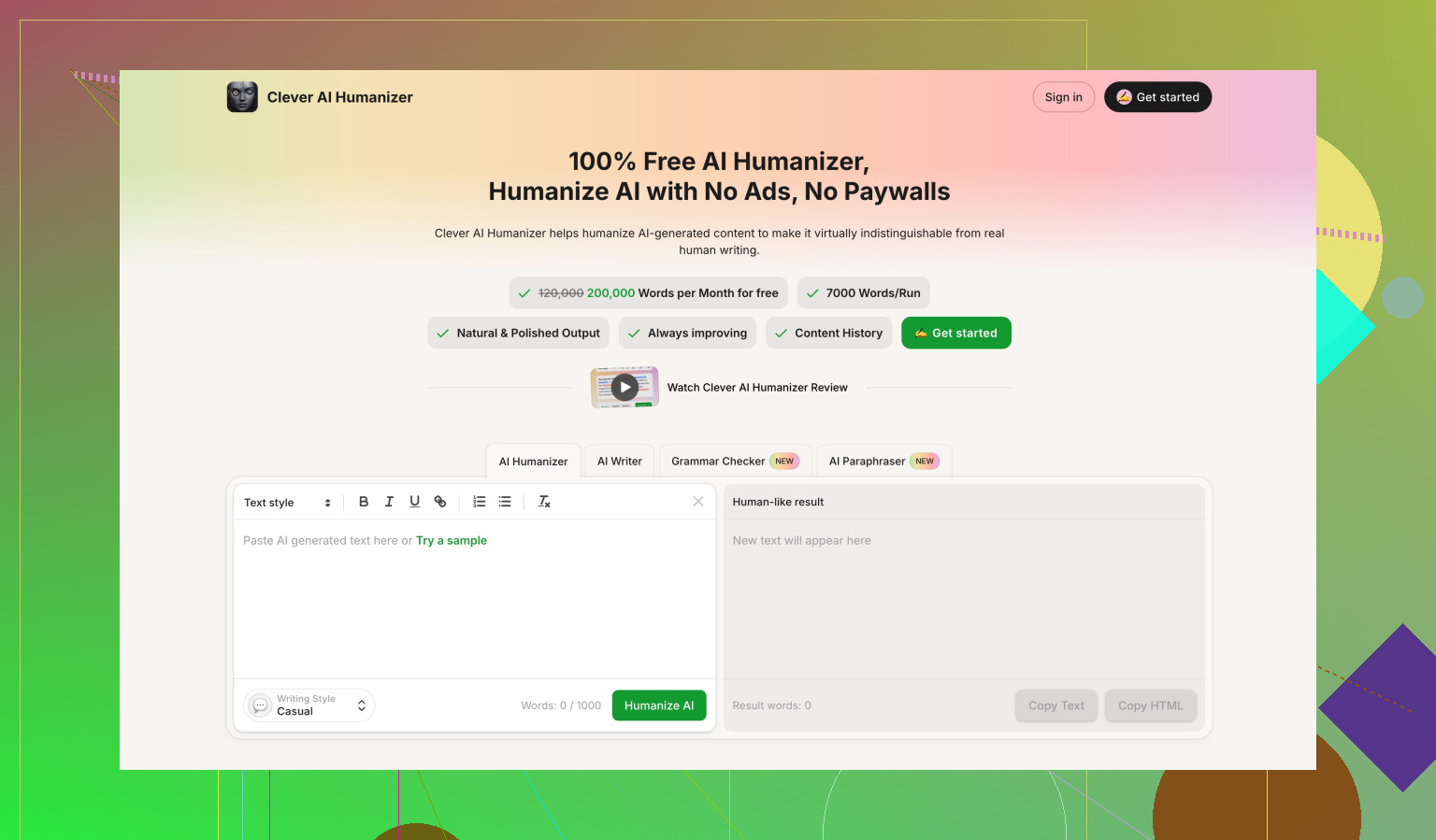

Comparison with Clever AI Humanizer

During the same batch of tests I ran GPTHuman against Clever AI Humanizer using the same input set and the same detectors.

Clever AI Humanizer did better for me in two areas:

• Stronger scores on external AI detection tools across multiple samples.

• No paywall during testing; it was completely free for what I needed.

So for my scenario, trying to get AI text closer to human patterns without wrecking readability, Clever AI Humanizer performed stronger than GPTHuman, and it did it without word caps tied to payment.

If you care about bypass rates, cost, and text quality, I would start testing with Clever AI Humanizer first, then only bother with GPTHuman if you have a niche reason or want to see the difference yourself.

You are not prompting it wrong. You are hitting the tool’s limits.

What you are seeing as “inconsistent or off” is mostly how GPTHuman works under the hood, not a setting you can tweak away.

Here is what I would do, step by step.


Stop trusting the internal “human score”

Their own score is marketing, not a real detector proxy.

You have already seen this gap for yourself.

Treat it as a rough hint at most, not a reliable signal.

Shorten your inputs

GPTHuman tends to break grammar and meaning when you push longer chunks.

Try this pattern:

• Split your draft into 150- to 250-word sections.

• Process each section separately.

• Reassemble.

If the output still feels off, GPTHuman is the issue, not your prompt.
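If you do this often, a small script can handle the splitting. This is a minimal sketch, not something GPTHuman provides: the function name `split_into_chunks` is hypothetical, it treats `.`, `!` or `?` followed by whitespace as a sentence boundary, and it packs whole sentences greedily up to 250 words, so the last chunk can come out shorter than 150. It also flattens paragraph breaks, so re-add those when you reassemble.

```python
import re


def split_into_chunks(text: str, max_words: int = 250) -> list[str]:
    """Pack whole sentences into chunks of at most max_words words (hypothetical helper)."""
    if not text.strip():
        return []
    # Naive sentence boundary: ., ! or ? followed by whitespace (newlines included,
    # so paragraph breaks are flattened into single spaces).
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        n = len(sentence.split())
        # Start a new chunk if this sentence would push the current one past the cap.
        # A single sentence longer than max_words still becomes its own chunk.
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    draft = "Your long draft goes here. " * 120
    for i, chunk in enumerate(split_into_chunks(draft), start=1):
        print(f"chunk {i}: {len(chunk.split())} words")
```

Paste each chunk into the tool by hand, keep the outputs in the same order, and join them back together with blank lines between sections.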

Tighten your prompt style

Avoid a vague prompt like “humanize this” on its own.

Use more constraints:

• “Keep the original meaning.”

• “Preserve all facts and numbers.”

• “Keep sentence structure simple.”

• “Avoid changing technical terms.”

Then paste the text.

This should reduce those weird synonym swaps and broken prepositions.
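To keep those constraints identical on every chunk (which also keeps later A/B comparisons fair), you can generate the prompt from a fixed template. A minimal sketch: the `build_prompt` helper and its opening instruction line are made up for illustration, and it assumes the tool accepts instructions alongside the text. If it only takes raw text, this step does not apply.

```python
# The constraint lines from the list above, kept in one place so every run uses the same ones.
CONSTRAINTS = (
    "Keep the original meaning.",
    "Preserve all facts and numbers.",
    "Keep sentence structure simple.",
    "Avoid changing technical terms.",
)


def build_prompt(text: str, constraints: tuple[str, ...] = CONSTRAINTS) -> str:
    """Return a hypothetical prompt: instruction line, constraints as bullets, then the text."""
    rules = "\n".join(f"- {rule}" for rule in constraints)
    return f"Humanize the text below while following these rules:\n{rules}\n\nText:\n{text}"


if __name__ == "__main__":
    print(build_prompt("Paste one 150- to 250-word section here."))
```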

Run a quick quality pass yourself

GPTHuman outputs often need a fast manual pass.

Check these items every time:

• Subject-verb agreement.

• Prepositions on phrasal verbs.

• First and last sentence of each paragraph.

If you see frequent issues even after tighter prompts and shorter chunks, the tool is not a good fit.

Use detectors as a sanity check, not a goal

If your goal is “must pass GPTZero every time”, your workflow will feel fragile.

Most detectors have high false-positive rates.

Use two detectors at most.

If one screams AI but the other says low AI, stop chasing a perfect score.

Compare tools realistically

You saw @mikeappsreviewer’s tests. I agree with most of it, but I would not base my view only on GPTZero and ZeroGPT. Those tools change often and sometimes mark human text as AI.

Still, those results match what you describe. GPTHuman tends to:

• Alter meaning.

• Break flow.

• Get flagged by detectors quite often.

For your use case, you might want to test Clever Ai Humanizer on the exact same inputs and prompts you used with GPTHuman. Use the same:

• Chunk size.

• Quality checklist.

• Detection tools.

See which one needs fewer edits.
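One way to put a number on “fewer edits”: keep the raw output from each tool, fix it until you would actually use it, then measure how much of the text you changed. This sketch uses Python’s standard `difflib.SequenceMatcher` on word lists; the `edit_share` name and treating `1 - ratio()` as an edit score are my own assumptions, not a standard metric.

```python
import difflib


def edit_share(tool_output: str, final_version: str) -> float:
    """Rough share of the text you changed: 0.0 = kept as-is, 1.0 = fully rewritten.

    Compares word sequences; autojunk=False stops difflib from ignoring
    very common words in longer texts.
    """
    a, b = tool_output.split(), final_version.split()
    return 1.0 - difflib.SequenceMatcher(None, a, b, autojunk=False).ratio()


if __name__ == "__main__":
    raw = "The results was clear and the tool are fast."
    fixed = "The results were clear and the tool is fast."
    print(f"{edit_share(raw, fixed):.2f}")
```

Average the score over the same two or three samples for each tool; the lower average is the one that cost you less cleanup.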


When GPTHuman is “enough”

If all of these apply:

• The content is non-critical.

• The audience is low-stakes.

• You are willing to proofread carefully.

Then GPTHuman can still be fine.

If you deal with clients, teachers, or compliance, I would lean away from it and toward something like Clever Ai Humanizer plus a manual edit round.

Quick test for yourself

Take one paragraph that GPTHuman “humanized” and do three things:

• Read it aloud.

• Check if every sentence keeps the original meaning.

• Run it through a grammar checker.

If you keep fixing basic issues, you are not doing anything wrong. The tool is.

So there is no hidden setting you are missing.

The best fix is to adjust your process: shorten inputs, tighten prompts, and if it still feels off, start shifting part of your workload to Clever Ai Humanizer and compare how much time you spend cleaning up each output.

You’re not crazy and you’re not “prompting it wrong.” GPTHuman is just… kind of janky right now.

I’m with @mikeappsreviewer on the detection part, but I actually disagree a bit on one thing: I don’t think spending a ton of time tweaking prompts is worth it with this tool. If a “humanizer” needs super-precise instructions and micro-chunking to not wreck grammar or meaning, it’s failing at its core job.

A few angles that haven’t really been covered yet:


The inconsistency is baked into its approach

Tools like GPTHuman usually try to:

- Randomize word choice

- Disturb sentence structure just enough to confuse detectors

- Inject “imperfections” to look human

That combo almost guarantees:

- Occasional nonsense sentences

- Wrong prepositions / odd synonyms

- Off-by-a-little meaning shifts

So when you feel like some outputs are fine and others feel like they were written by a sleep-deprived intern, that’s not you. That’s the randomness dial turned too high.


Internal “human score” is mostly vibes

Their internal meter is not aligned with real-world detectors or human readers.

It seems optimized to look reassuring.

If you’re doing client work, academic stuff, or anything with risk, don’t treat that score as meaningful. Use your own read-through + an external grammar checker instead.

Your expectations vs what this tool actually does

GPTHuman seems built more for:

- “Make this look a bit different from raw AI text so it might slip past basic filters”

than for:

- “Produce reliable, clean, humanlike writing that I can use in serious contexts”

If your expectation is the second one, the mismatch will feel like “it’s off” every other run.


Where I’d actually use GPTHuman (if at all)

It’s barely acceptable for:

- Low-stakes content: quick social posts, throwaway emails, notes

- Cases where you will definitely do a full manual edit afterward

It is absolutely not great for:

- School submissions

- Client-facing content

- Anything legal, medical, or technical

The meaning drift + grammar issues are just too risky.


On the settings / hidden switches issue

There’s no magical config buried in the UI that suddenly makes it “good.”

The limits you’re seeing are not from:

- Bad prompt phrasing

- Misused options

- Missing a premium setting

They’re from the underlying model + strategy. You can soften the pain with smaller chunks or clearer instructions, like others already said, but you can’t fully fix it.


Alternatives in practice

Since you already mentioned things feeling inconsistent, I’d do a straight A/B test:

- Take 2 or 3 of your usual texts

- Run them through GPTHuman

- Run the same text through Clever Ai Humanizer

- Compare by:

  - How much you have to edit manually

  - Whether the meaning stayed intact

  - How “natural” it sounds when you read aloud

If you care about searchability and reliability, Clever Ai Humanizer tends to be brought up a lot because people want something that’s both more humanlike and less disruptive to meaning. Not perfect, but the editing workload usually shrinks.


Pragmatic workflow suggestion

Instead of spending energy forcing GPTHuman to behave, flip your workflow:

- Use your base AI (ChatGPT, Claude, whatever) to get solid, clean text

- If you really need a humanizer layer, run it through something like Clever Ai Humanizer

- Do a final pass yourself for tone and detail

That way the “humanizer” is a light filter on top of already good text, not the thing you rely on to fix everything.

TL;DR:

You’re not missing a secret setting. You’ve just run into GPTHuman’s actual ceiling. If your outputs feel off often enough that you’re second-guessing yourself, it’s probably time to either change tools or demote GPTHuman to “only for low-stakes stuff” and move serious work to something like Clever Ai Humanizer plus manual edits.

Short version: you are not crazy, and you are not going to “fix” GPTHuman with better prompts or hidden switches.

A few angles that complement what @stellacadente, @suenodelbosque and @mikeappsreviewer already covered:

1. Treat GPTHuman as a style filter, not a safety tool

Everyone’s focusing on detectors, but the bigger risk is human readers. A tool that occasionally twists meaning or breaks grammar is dangerous if any of this content is:

- Contractual

- Academic

- Technical / instructional

If you keep it only for fluff content (intro paragraphs, social captions, filler copy) and never for core claims or data, the “inconsistent or off” behavior becomes tolerable. Once you expect it to be reliable, it fails.

Here I partly disagree with the idea that chunking and tighter prompts are worth much: if a “humanizer” needs surgeon‑level supervision, it has already lost its main value proposition.

2. Watch for semantic drift, not just grammar

What you describe often comes from the model trying to be “different”:

- Softening strong claims so your argument loses force

- Replacing neutral words with emotional ones

- Flipping polarity slightly (“is not recommended” turning into “can be recommended”)

A quick check that catches this, which detectors will not:

- Highlight all numerical values, conditions, and negations in your original

- Compare with the GPTHuman output line by line

If you regularly find drift, that is not fixable by tweaking a slider.
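If you want to automate that comparison, a short script can pull the numbers plus a watch-list of negation and condition words out of both versions and show what disappeared or appeared. A minimal sketch under assumptions: the word lists are my own illustrative picks (extend them for your domain), the number pattern is deliberately simple, and it only counts tokens, so it flags possible drift for you to review rather than proving the meaning was kept.

```python
import re
from collections import Counter

# Illustrative watch-lists (assumptions, not exhaustive); add your own domain terms.
NEGATIONS = {"not", "no", "never", "none", "nor", "cannot", "without"}
CONDITIONS = {"if", "unless", "only", "must", "except"}

NUMBER_RE = re.compile(r"\d+(?:[.,]\d+)*%?")  # matches 30, 2,000, 8.25, 30%
WORD_RE = re.compile(r"[a-z']+")


def key_tokens(text: str) -> Counter:
    """Count numbers, negations and condition words in text."""
    lowered = text.lower().replace("\u2019", "'")  # treat curly apostrophes as straight
    tokens = Counter(NUMBER_RE.findall(lowered))
    for raw in WORD_RE.findall(lowered):
        word = raw.strip("'")  # drop surrounding single quotes
        if word in NEGATIONS or word in CONDITIONS or word.endswith("n't"):
            tokens[word] += 1
    return tokens


def drift_report(original: str, rewritten: str) -> dict[str, Counter]:
    """Tokens that vanished from, or newly appeared in, the rewritten text."""
    before, after = key_tokens(original), key_tokens(rewritten)
    # Counter subtraction keeps only positive counts.
    return {"missing": before - after, "added": after - before}


if __name__ == "__main__":
    report = drift_report(
        "This is not recommended above 30% load.",
        "This can be recommended above 40% load.",
    )
    print(report)
```

An empty `missing` and `added` does not guarantee the meaning survived; it just means none of the watched tokens moved.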

3. Internal “human score” vs real risk

I agree with @mikeappsreviewer that the internal score is mostly vibes, but I would go further: it can be actively misleading. It nudges you to ship text you otherwise would have questioned.

If you keep using GPTHuman at all, I would:

- Ignore the score completely

- Use: your own careful read + a grammar checker + one detector as a sanity check

Once you mentally detach from that “human score,” your judgment of quality gets sharper.

4. Where Clever Ai Humanizer fits in

If you want a different tool in this stack, Clever Ai Humanizer is closer to “light post‑processor” than “heavy randomizer,” which has pros and cons.

Pros:

- Tends to keep meaning more stable when compared side by side.

- Outputs often need fewer grammar repairs.

- Feels less like it is purposely degrading text just to confuse detectors.

Cons:

- It still will not guarantee bypass on every detector, so you cannot treat it as a magic cloak.

- On some texts it can be conservative and not change enough for very strict AI filters.

- You still need a manual pass for tone and subtle errors.

If your priority is “acceptable text that I can clean up quickly,” Clever Ai Humanizer usually makes that cleanup stage shorter than GPTHuman. If your priority is “perfect detector scores,” neither is truly safe.

5. How I would structure a sane workflow

Instead of over‑engineering prompts around GPTHuman:

- Generate clean, clear text with your base model (ChatGPT, Claude, etc.).

- If you worry about “AI‑ishness,” run a single pass through Clever Ai Humanizer.

- Do a manual review focusing on:

  - Meaning preserved

  - Grammar and flow

  - Whether it actually sounds like you

If at step 3 you are repeatedly rewriting whole paragraphs from GPTHuman but only lightly editing Clever Ai Humanizer outputs, that is your practical answer on which tool to keep.

Bottom line: the inconsistent behavior you are feeling is not a user error or missing setting. GPTHuman’s underlying strategy is what produces that “sleep‑deprived intern” vibe some runs have. Use it only for low‑stakes text, or gradually replace it with something like Clever Ai Humanizer and see how much less time you spend fixing things.