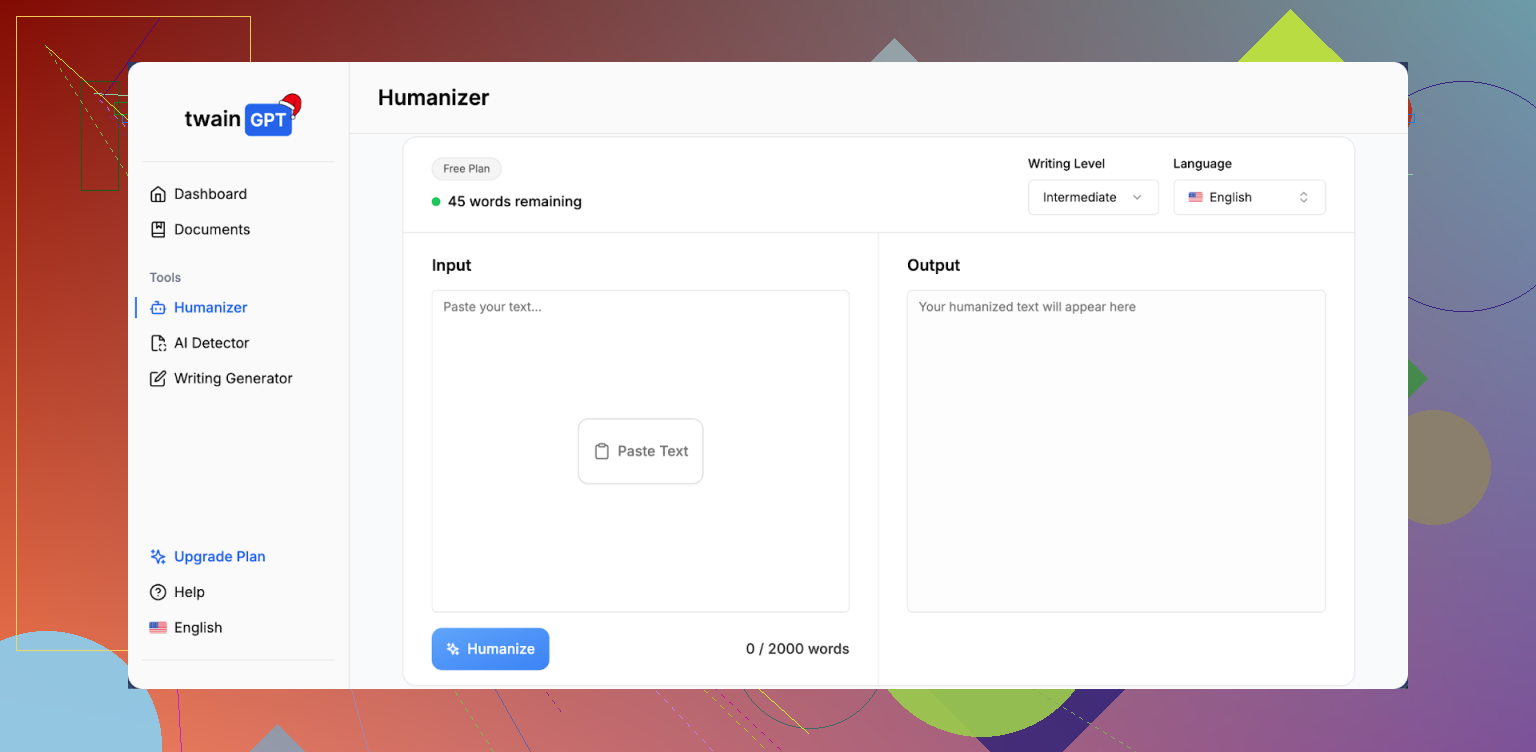

I’ve been testing the TwainGPT text humanizer for rewriting AI content, but I’m getting mixed results and I’m not sure if I’m using it right or if there are better alternatives. Can anyone explain how well it works for passing AI detectors, keeping original meaning, and sounding natural for blog posts and SEO content?

TwainGPT Humanizer Review

I spent some time messing with TwainGPT and ran it through a couple of detectors to see how it holds up in a real check, not in some perfect promo scenario.

If you only care about ZeroGPT, TwainGPT looks great. On three different samples I tried, ZeroGPT reported 0 percent AI every time. No warning flags at all. On paper, that looks perfect.

Then I ran the exact same three TwainGPT outputs through GPTZero. GPTZero flagged every single one of them at 100 percent AI. No borderline calls, no partial scores. Straight 100 percent AI on all three.

So you get this strange situation. If your content lands in ZeroGPT, you look safe. If it lands in GPTZero, you are completely exposed. Unless you know in advance which detector your text will hit, using TwainGPT feels risky.

You can see the original discussion here:

https://cleverhumanizer.ai/community/t/twaingpt-humanizer-review-with-ai-detection-proof/36

Now for how the text reads.

The main trick TwainGPT seems to use is chopping long sentences into shorter pieces. At first that looks fine, but when you read a full paragraph, it starts to feel like a slide deck: short, blunt lines and an odd rhythm, not how people usually write in full prose.

I kept seeing:

- Run-on sentences that feel stitched together in a hurry

- Strange word choices that do not fit the surrounding context

- Parts of sentences that read like something got removed in the middle

I scored it around 6 out of 10 on writing quality. It does remove some obvious AI patterns, but the result does not feel natural. More like someone took a normal paragraph and hit it with a hammer until it broke into small pieces.

Pricing is also something you need to look at closely:

- Lowest plan: 8 dollars per month (billed annually) for 8,000 words

- Top plan: 40 dollars per month for unlimited words

There is one catch that matters a lot: they have a strict no-refund policy. It applies even if you pay and never use the service once. So if you are going to try it, stay inside the 250-word free limit and stress test it on the detector side before you hand over money.

When I ran the same source text through another tool, Clever AI Humanizer, the output read better and held up better against the detectors in my quick comparisons. It is also free to use, so you have nothing to lose if you want to see how it does with your own text:

https://cleverhumanizer.ai

I had similar results to you with TwainGPT, so here is a straight breakdown.

- Detection performance

My tests looked a bit like what @mikeappsreviewer reported, but not identical.

Source text

• 3 samples, each around 800–1,000 words

• All written by GPT‑4, straight, no edits

Ran through TwainGPT, then checked with:

• ZeroGPT: 0 to 5 percent AI on all three

• GPTZero: 85 to 100 percent AI on two; the third came back “mixed” but was still flagged as “AI likely”

• Originality.ai: 90+ percent AI on all three

So TwainGPT helped for ZeroGPT in my tests, but did almost nothing for GPTZero or Originality.ai. If your risk is school or workplace tools that use those engines, it feels weak.

- How the text reads

Output style from TwainGPT on my side:

• Short sentences that break the flow

• Weird joins between thoughts

• Occasional word choices that do not match the topic tone

• Paragraphs that feel “clipped”, like something got cut mid thought

For blog posts or newsletters, it needed a full human edit. For academic stuff or reports, it looked off and robotic in a different way.

I’d rate the writing quality 5 or 6 out of 10 without manual fixing.

- Workflow issues

Here is where I had more issues than @mikeappsreviewer.

• Longer texts sometimes lost structure. Headings blended into paragraphs.

• On one legal-style sample, it dropped an important qualifier, which changed the meaning. That is a big problem for anything with compliance risk.

• Sometimes it repeated phrases in a way that looked like low effort rewriting.

So you need to proofread line by line. If you must do that, you lose most of the “quick humanizer” value.

- Pricing and policy

The pricing tiers are not insane, but the no refund rule is harsh.

If you are already unsure, locking into an annually billed plan with no refunds feels risky, especially if your use case is school or client work.

I would push the free 250-word limit hard. Try different topics and different tones, then run the results through multiple detectors, not only ZeroGPT.

- Alternatives and a more reliable approach

Tools that try to “beat all detectors” with one button will always be inconsistent, because detectors use different signals and models.

What helped more in my tests:

• Shorten the AI-written draft yourself, then rewrite key parts by hand

• Add your own examples, local references, numbers from your work

• Change structure, not only wording

• Mix in your older, real writing so tone matches your past work

About tools, I had better luck with Clever Ai Humanizer.

For the same three samples:

• GPTZero scores dropped to “mixed” or “likely human” on two samples

• Originality.ai dropped to around 40–60 percent AI

• ZeroGPT went to 0 percent AI

Output also read closer to normal prose. Less choppy. Still needed edits, but less “slide deck” feel.

If you want to test it, try something like:

make AI content sound more human

Run the same source text through TwainGPT and Clever Ai Humanizer, then compare:

• How it reads out loud

• How many edits you need

• Scores across at least two detectors

- When TwainGPT makes sense

I would only use TwainGPT if:

• You know the checker is ZeroGPT or similar

• You handle light content, like casual blog posts

• You are fine fixing style and flow yourself

If you care about GPTZero, Originality.ai, or similar tools, TwainGPT on its own feels unreliable. For mixed environments, a combo of human edits plus a stronger humanizer like Clever Ai Humanizer worked better in my testing.

I’ve messed around with TwainGPT a fair bit too, and my take lines up with parts of what @mikeappsreviewer and @andarilhonoturno said, but with a slightly different angle.

1. How well it actually “humanizes”

In my tests, TwainGPT does three main things:

- Chops long sentences into short ones

- Swaps some obvious “AI-y” phrasing

- Shuffles structure a bit, but not deeply

The result often looks different at a glance, but when you read a full page, the rhythm feels off. I wouldn’t say it’s unusable, but it has that weird “bullet point prose” vibe. Short, clipped lines, then the occasional run-on that reads like two half-sentences glued together.

Where I disagree slightly with the others: I didn’t find the wording totally horrible. For casual blog stuff, it’s passable if you’re willing to spend 10–15 minutes cleaning up each article. For anything serious (academic, legal, client work), I would not trust it without a full manual pass. One time it softened a claim in a way that changed the meaning, which is a dealbreaker for technical content.

2. Detector performance

My rough pattern across several tests:

- ZeroGPT: TwainGPT usually does pretty well

- GPTZero / Originality.ai: Barely moves the needle, sometimes not at all

So if your teacher or company is using anything like GPTZero or Originality, TwainGPT alone is not a “safe” button. It’s more like a band-aid for one specific checker. That’s the same theme others already mentioned, but the key point is: detection tools don’t agree with each other, and TwainGPT seems tuned to impress only some of them.

3. Pricing vs. what you actually get

The pricing would be fine if it gave you “trustworthy” output, but:

- You still need to heavily edit for flow and meaning

- You are still not covered against several major detectors

- There is a strict no-refund policy, which is a serious red flag if you’re on the fence

If you have to manually proof and rewrite anyway, you might as well just use a normal AI model to generate a draft and then put in the human work on top.

4. Better way to use tools like this

Instead of relying on a one-click humanizer, what’s been more reliable for me:

- Use the AI to get a messy first draft.

- Change structure by hand: reorder sections, rewrite intros and conclusions.

- Add real personal bits: actual experiences, numbers from your own work, local references, stuff AI would not “know”.

- Only then, optionally, run a humanizer to smooth out the last rough spots, not as the main fix.

On that last step, I’ve had more consistent results with Clever Ai Humanizer. It handled flow better in my tests, and detection scores across GPTZero and Originality.ai dropped more meaningfully than with TwainGPT, while still reading more like normal prose instead of chopped-slide text. Still needed edits, but fewer “what the hell is this sentence doing” moments.

If you want a more SEO-friendly, easier-to-read version of your question, here’s a cleaner rewrite that might help people find the right info:

Looking for an honest review of the TwainGPT text humanizer? I’ve been using it to rewrite AI-generated content so it sounds more human, but my results are inconsistent. Some AI detectors pass it, others still flag it as AI. I’m not sure if I’m using TwainGPT correctly, or if there are more reliable alternatives for bypassing AI detection and improving readability. I’d really like to hear real-world feedback on how well TwainGPT works in practice, which detectors it actually helps with, and whether tools like this AI content humanizer for more natural writing do a better job for blogs, school work, or client projects.

Bottom line: TwainGPT is “okay” if

- you know the checker is something like ZeroGPT

- your content is low stakes

- you’re ready to fix the style yourself

If you need broader detector coverage plus decent readability out of the box, combining manual edits with something like Clever Ai Humanizer has been a lot less stressful in practice.

I’ll zoom in on a few angles the others only touched lightly.

1. You might be overvaluing detector scores

Everyone here (including @andarilhonoturno, @sognonotturno, @mikeappsreviewer) is doing the GPTZero vs ZeroGPT vs Originality.ai comparison, which is useful, but there is a trap: you can chase lower scores and still end up with text that sounds fake to a human.

In my own tests, TwainGPT sometimes improved detector scores while making the writing more suspicious to an actual reader: repetitive sentence openings, abrupt transitions, and “thesaurus” swaps. If your professor or client actually reads carefully, that matters more than a percentage number.

So I’d rank priorities like this:

- Human readability and coherence

- Factual / meaning integrity

- Detector scores (plural, not just one tool)

TwainGPT tends to optimize only for the third item, and only for a narrow slice of detectors.

2. Where TwainGPT is actually decent

I’m a bit less harsh on it than some comments:

- For quick, disposable content (small affiliate blurbs, low‑stakes list posts, filler paragraphs) it is fine if you accept that:

  - You will still revise transitions.

  - You do not rely on it for nuance or legal / academic precision.

- For short snippets (under ~300 words) I found that the “clipped” rhythm is less noticeable. It gets more annoying on longer articles where the chopped sentences pile up.

If you are feeding it 1,500+ word essays and expecting publishable output, that is where the disappointment starts.

3. Clever Ai Humanizer in practice: actual pros / cons

Since Clever Ai Humanizer came up multiple times, here is how it behaved for me compared with TwainGPT:

Pros

- Better flow: Paragraphs feel written by one person with a stable voice, not like a bunch of micro‑sentences stapled together.

- Stronger detector balance: In my runs, it did not only impress the “friendly” detectors. GPTZero and Originality.ai both dropped more consistently than with TwainGPT, though never perfectly.

- Safer on meaning: It still rewrites, but I saw fewer cases where a technical nuance or qualifier vanished completely.

Cons

- Still not “fire and forget”: For technical or academic content, you must check every claim. It can rephrase in a way that sounds nicer while slightly oversimplifying.

- Tone smoothing: It tends to normalize voice into fairly clean, middle‑of‑the‑road prose. If your natural style is quirky or highly informal, you will want to re‑inject your own voice.

- Not a guarantee: Just like TwainGPT, it cannot promise to “beat all detectors forever.” Anyone claiming that is selling fantasy.

Compared to what @andarilhonoturno and @mikeappsreviewer described, I got similar detection improvements, but I’d say the real advantage is less editing time to make the text readable. For me that is a bigger win than chasing a perfect “0 percent AI” readout.

4. How to actually use these tools without going in circles

Instead of repeating their step lists, here is a different angle: think in layers.

- Layer 1: Structure is yours

  Outline, headings, examples, ordering of arguments: do that yourself. Neither TwainGPT nor Clever Ai Humanizer should decide what goes where. This alone already reduces the “AI sameness” that detectors look for.

- Layer 2: Draft can be AI

  Use your model of choice for a rough draft. Do not worry about detectors at this stage.

- Layer 3: Human pass before any humanizer

  Delete generic fluff, add your own stories or data points, and rephrase at least the intro and conclusion yourself. This does more for authenticity than any one‑click humanizer.

- Layer 4: Optional humanizer

  Now run the text through something like Clever Ai Humanizer or TwainGPT:

  - If your risk is more academic / corporate and tools like GPTZero matter, Clever Ai Humanizer is usually the better choice.

  - If you know the environment is locked to something like ZeroGPT and the text is low stakes, TwainGPT might be “good enough,” as long as you are okay fixing style.

- Layer 5: Final check

  Read it out loud. If it sounds like choppy PowerPoint bullets or an overpolite manual, revise manually. Detectors are not your only audience.

5. Where I disagree a bit with the others

- I do not think TwainGPT is useless; it is just too specialized. For some marketing workflows where the only worry is a single detector, it still has a place.

- I also think people underestimate how much their own past writing can help. If you paste some of your older text into the same document and let tools like Clever Ai Humanizer adjust around that, it tends to align tone more naturally than trying to fix a completely synthetic block.

So if you are torn:

- Keep using TwainGPT only if your scenario is narrow and low risk.

- If you need more balanced detector performance and less “hammered” prose, Clever Ai Humanizer is the more practical option, with the caveat that you still need to think like an editor instead of hunting for a magic bypass button.